In the world of forensic code analysis, my daily routine involves tearing down malicious payloads. But over the last few years, massive data harvesting hasn't required a zero-day exploit. It’s happening through the platforms we use every day. As a malware analyst, I view this through the lens of data sovereignty.

In this breakdown, we reverse engineer Meta's AI gathering strategy and dissect the dark patterns designed to keep your data flowing. For foundational knowledge on locking down your personal data, check out our guide to digital footprint minimization.

The Anatomy of the Meta AI Data Grab

In mid-2024, Meta announced plans to train its LLMs using public content shared by adults on Facebook and Instagram since 2007. Every public photo, comment, and status update became potential fodder for its models.

From a forensic standpoint, this is "Living off the Land" (LotL). Meta already possesses the world’s largest reservoir of interaction data; it simply changed the rules of engagement.

The "Legitimate Interest" Loophole

Rather than asking for explicit consent (opt-in), Meta claimed that training its AI models constituted a "legitimate interest" under Article 6(1)(f) of the GDPR. This maneuver allowed the company to process data by default without asking for consent, placing the burden of objection on the user.

The Resistance: NOYB and Regulatory Pushback

The backlash was swift, led by NOYB (None of Your Business). The group filed 11 complaints across Europe, treating Meta’s policy change like a critical security vulnerability. The pressure eventually forced Meta to "pause" using European user data for AI training.

For a deeper understanding of how international data laws protect you, read our detailed breakdown of GDPR and CCPA privacy frameworks.

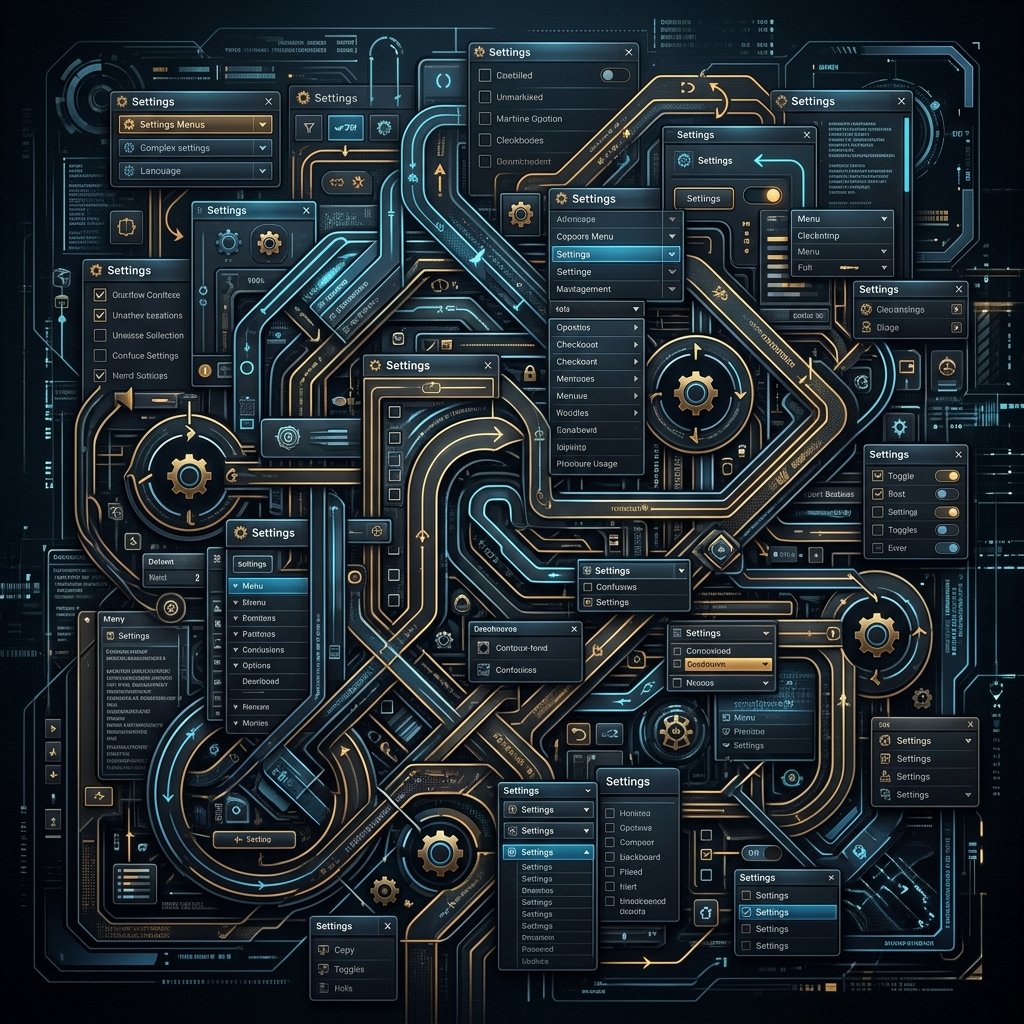

Reverse Engineering the "Opt Out" Dark Patterns

In UX design, a "dark pattern" is an interface crafted to trick or exhaust users. As someone who routinely decompiles obfuscated code, I found the architecture of Meta’s opt-out process remarkably familiar. It was designed to be technically compliant, yet practically inaccessible.

Actionable Steps: How to Opt Out of Meta AI Training

Here is the surgical extraction method for limiting Meta's AI access:

On Instagram:

- Open Profile > Settings & Privacy > Privacy Center.

- Select AI at Meta.

- Tap Submit an objection request and enter a short, firm statement of your objection.

On Facebook:

- Navigate to Settings & Privacy > Privacy Center.

- Locate the How Meta uses information... section.

- Click the Right to Object link and fill out the form.
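
Both forms ask for a written statement, so it can help to draft one before you start. The snippet below is a minimal sketch of a reusable template, not a form Meta prescribes: the function name `build_objection_statement` and the wording are illustrative assumptions. The only legal anchors it uses are Article 21 GDPR (the right to object) and the Article 6(1)(f) basis mentioned above.

```python
from datetime import date


def build_objection_statement(full_name: str, country: str, on: date) -> str:
    """Compose a short objection to AI-training use of personal data.

    Hypothetical helper: the wording is an example, adapt it to your situation.
    """
    return (
        f"I, {full_name}, resident in {country}, object under Article 21 GDPR "
        "to the processing of my personal data for training Meta's AI models. "
        "Meta relies on legitimate interest (Article 6(1)(f) GDPR); "
        "I do not consent to this use and request that it stop. "
        f"Date: {on.isoformat()}"
    )


if __name__ == "__main__":
    # Example values only; replace with your own details.
    print(build_objection_statement("Jane Doe", "Ireland", date(2024, 6, 30)))
```

Paste the generated text into the objection field and keep a copy, along with the submission date, in case you need to follow up.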

For advanced strategies on locking down communication channels, review our guide to securing your social media privacy settings.

The Escalation: When AI Scraping Leads to Real World Threats

When AI models ingest massive amounts of personal data, they become adept at generating synthetic identities and hyper-personalized spear-phishing campaigns. Threat actors no longer need to hack you; they can simply query a model that has already digested your digital life.

If you suspect your footprint has been weaponized, we highly recommend the services of Trusted Private Investigators specializing in cyber forensics for identity theft attribution and recovery.

Conclusion

The fight against corporate AI data scraping is the new frontier of cybersecurity. Meta’s approach shows that our digital data has immense value. By understanding these tactics and exercising your opt-out rights, you act as your own best firewall. Stay vigilant, stay skeptical, and protect your footprint.